We built the first non-trivial UX demo of the Agentic Web

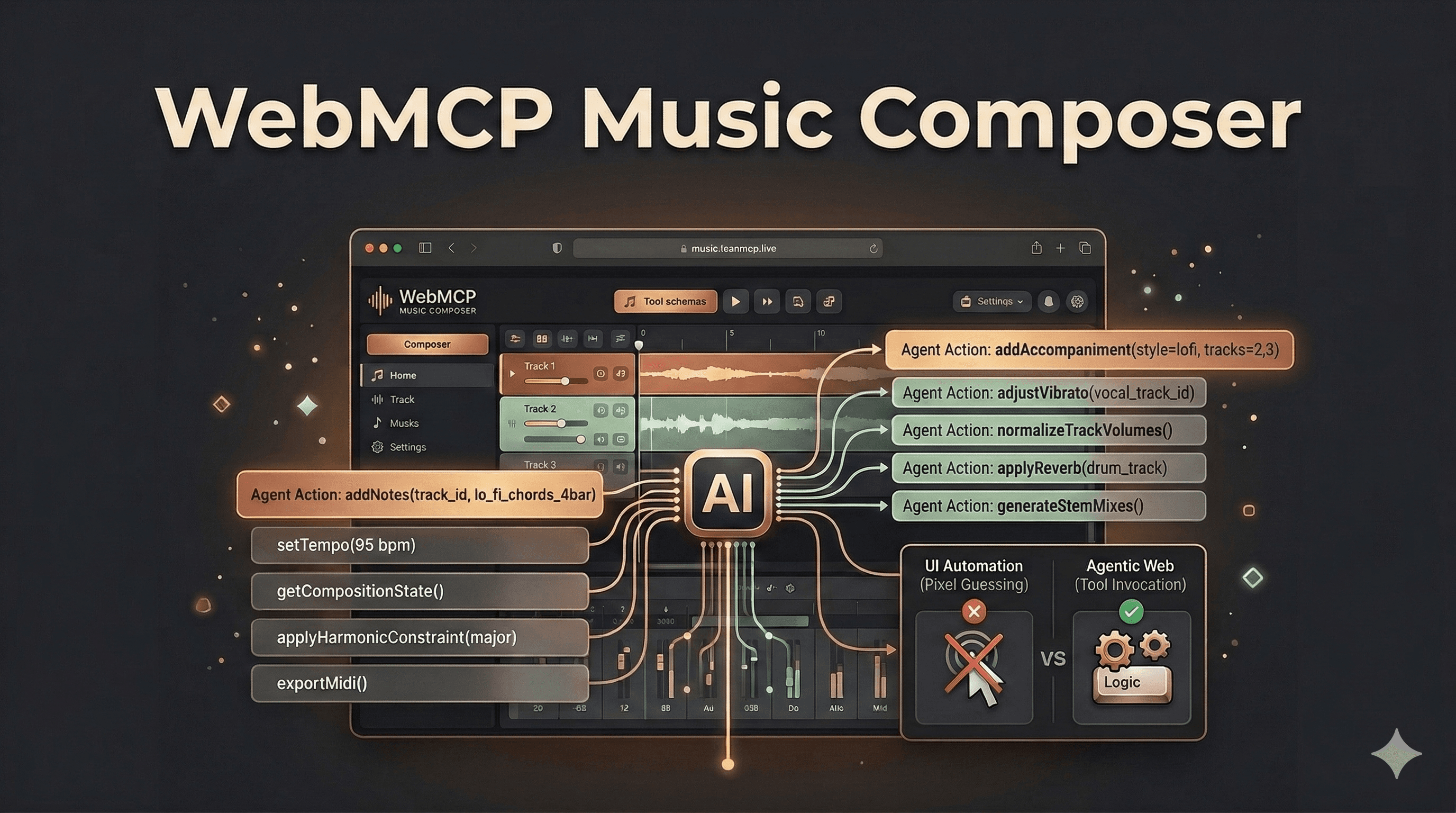

Most "AI on the web" demos currently making the rounds share a dirty little secret: they are fundamentally just UI automation. The agent looks at pixels, guesses what the interface means, and then clicks around until something works.

While flashy for a Twitter video, pixel-guessing is a brittle foundation. For production workflows, you need iteration, repeatability, and precise control.

Enter the WebMCP Music Composer.

This demo is interesting for a completely different reason: it shows an AI agent composing music by calling tools that the web app explicitly exposes from inside the browser context. In other words, the web page doesn’t just render a UI for a human; it publishes its capabilities for an agent. The agent doesn’t "use the UI"; it invokes functions.

This establishes a fundamentally better, more reliable contract for agentic interaction.

Try it live: music.leanmcp.live

Why a Music Sequencer is the Ultimate Stress Test

Building a music composition tool looks "fun" on the surface, but architecturally it’s brutal, in the best way possible. It is the perfect crucible for testing agentic capabilities because it is:

- Stateful: Once you add a note, every subsequent decision depends on it.

- Iterative: You don’t want one-shot output. You want a loop of refining, listening, and editing.

- Constraint-driven: It requires navigating timing grids, note lengths, track instruments, tempo, and harmony rules.

- Multi-step: Prompting an agent to "make a lo-fi beat" actually translates to 30–200 micro-actions under the hood.

If an agent can reliably drive a sequencer through hundreds of tool calls, we aren’t just proving that "LLMs can do music." We are proving something more valuable: you can model a complex UI workflow as a stable, inspectable set of capabilities, and an agent can operate it through explicit, repeatable tool calls. That’s what most web agent demos claim to show. This one actually does.

What the Community is Saying

The response to this architectural shift has been incredible. We've been thrilled to see the WebMCP Music Composer catch the attention of the engineers and product thinkers who are pioneering the agentic web.

Here is what people are saying about the demo:

"Incredible demo! I love how you demonstrated it with non-trivial UX. We built WebMCP to make these kinds of seamless agentic interactions possible, and you’re helping show just how powerful it can be." - Andrew Nolan, Microsoft

"Wow. A beautiful demo of something other than making bookings and scheduling appointments!" - u/nucleustt, Reddit

"Holy shit this is awesome" - u/naseemalnaji-mcpcat, Reddit

The Core Product Lesson: Design Your Tool Surface Like an API, Not a UI

If you want one thing from this project that you can copy into your own agent-enabled web applications today, ignore the music for a second and study the implied design pattern.

1. Expose "Domain Actions," Not "UI Actions"

When building for agents, your tools should reflect the underlying domain, not the visual interface.

Bad tools (UI-shaped):

- clickPlayButton()

- openSettingsModal()

- dragNoteToBar(12)

Good tools (Domain-shaped):

- setTempo(bpm)

- addTrack(instrument)

- addNotes(trackId, notes[])

- getCompositionState()

Domain tools are stable under UI redesigns; UI tools are not. A sequencer's UI could change completely—from a piano roll to a step grid to traditional sheet music notation—but the domain tools remain consistent. That is what makes the agent integration durable.
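
To make the distinction concrete, here is a minimal sketch of a domain-shaped tool surface. The four method names come from the list above; everything else (the `Sequencer` class, the `Note` and `Track` shapes, the instrument names, the default tempo) is an assumption made for illustration and is not the demo's actual code.

```typescript
// Hypothetical domain model. Only the four tool names come from the article;
// the data shapes and defaults below are assumptions.
type Instrument = "piano" | "bass" | "drums";

interface Note {
  pitch: number;    // MIDI note number
  start: number;    // position in beats from the start of the track
  duration: number; // length in beats
}

interface Track {
  id: number;
  instrument: Instrument;
  notes: Note[];
}

interface Composition {
  bpm: number;
  tracks: Track[];
}

class Sequencer {
  private state: Composition = { bpm: 120, tracks: [] };
  private nextTrackId = 1;

  setTempo(bpm: number): void {
    this.state.bpm = bpm;
  }

  // Returns the id of the new track so later calls can refer to it.
  addTrack(instrument: Instrument): number {
    const id = this.nextTrackId++;
    this.state.tracks.push({ id, instrument, notes: [] });
    return id;
  }

  addNotes(trackId: number, notes: Note[]): void {
    const track = this.state.tracks.filter((t) => t.id === trackId)[0];
    if (!track) throw new Error(`Unknown trackId ${trackId}`);
    track.notes.push(...notes);
  }

  // Returns a deep copy so callers cannot mutate internal state.
  getCompositionState(): Composition {
    return JSON.parse(JSON.stringify(this.state)) as Composition;
  }
}

// Nothing here mentions buttons, modals, or drag targets.
const seq = new Sequencer();
seq.setTempo(84);
const drums = seq.addTrack("drums");
seq.addNotes(drums, [
  { pitch: 36, start: 0, duration: 1 },
  { pitch: 38, start: 1, duration: 1 },
]);
const snapshot = seq.getCompositionState();
console.log(snapshot.bpm, snapshot.tracks[0].notes.length);
```

Because the class knows nothing about rendering, a piano roll, a step grid, or a notation view could each sit on top of it without changing what the agent calls.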

2. Keep Tools Small, Composable, and Predictable

Music creation, like most complex work, is a chain of small decisions: choosing a tempo, laying down a chord progression, adding drums, weaving in a melody, and tweaking variations.

If your exposed tool is simply composeSong(style), the agent cannot steer. It cannot recover from mistakes. It cannot edit. By making tools granular, you empower the agent to do exactly what humans do: iterate.
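
A rough sketch of what granularity buys you follows. The tools `addStep`, `removeStep`, and `listSteps`, the 16-beat grid, and the result type are all hypothetical; a scripted list of attempts stands in for the agent. The point is only that one rejected call leaves earlier work intact, and one decision can be edited without redoing the rest.

```typescript
// Hypothetical granular tools; none of these names come from the demo.
type ToolResult = { ok: true; value: number } | { ok: false; error: string };

interface Step {
  beat: number;  // position on the grid
  pitch: number; // MIDI note number
}

const GRID_BEATS = 16; // assumed loop length
const melody: Step[] = [];

function isGridIndex(n: number, size: number): boolean {
  return Math.floor(n) === n && n >= 0 && n < size;
}

// Adds one step and returns its index, or an error the caller can act on.
function addStep(step: Step): ToolResult {
  if (!isGridIndex(step.beat, GRID_BEATS)) {
    return {
      ok: false,
      error: `beat must be an integer from 0 to ${GRID_BEATS - 1}, got ${step.beat}`,
    };
  }
  melody.push({ beat: step.beat, pitch: step.pitch });
  return { ok: true, value: melody.length - 1 };
}

// Removes one step and returns how many steps remain.
function removeStep(index: number): ToolResult {
  if (!isGridIndex(index, melody.length)) {
    return { ok: false, error: `no step at index ${index}` };
  }
  melody.splice(index, 1);
  return { ok: true, value: melody.length };
}

function listSteps(): Step[] {
  return melody.map((s) => ({ beat: s.beat, pitch: s.pitch }));
}

// Scripted stand-in for an agent: try, read the error, retry.
const log: string[] = [];
const attempts: Step[] = [
  { beat: 0, pitch: 60 },
  { beat: 16, pitch: 64 }, // off the grid: rejected, earlier work is kept
  { beat: 4, pitch: 64 },  // corrected retry
];
for (const step of attempts) {
  const result = addStep(step);
  log.push(result.ok ? `added #${result.value}` : `rejected: ${result.error}`);
}

// Edit a single decision without recomposing everything.
removeStep(0);
console.log(log, listSteps());
```

With a single composeSong(style) call, the rejected second attempt would have meant throwing away the whole result and starting over.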

3. Tool Schemas are the New "Agent UX"

In classic software development, UX consists of buttons, layout, and visual affordances.

In agentic software, schemas are the affordances. Your schema design is your user experience. A world-class agent UX requires:

- Crystal clear parameter descriptions

- Strict constraints (ranges, enums)

- Smart defaults

- Explicit, actionable error messages

A well-designed schema prevents the model from doing dumb things in the first place. Rejecting a bad call at the schema boundary is cheaper than trying to fix a hallucinated UI click after the fact.
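
As one possible illustration of those four properties, the sketch below describes a single tool with a JSON-Schema-flavoured object and checks calls against it. The keywords `minimum`, `maximum`, `enum`, and `default` follow JSON Schema naming; the `volume` parameter, the instrument list, the limits, and the `validate` helper are assumptions for this example, not WebMCP's API or the demo's real schema.

```typescript
// Hypothetical schema types using JSON Schema-style keywords.
interface NumberParam {
  type: "number";
  description: string;
  minimum: number;
  maximum: number;
  default?: number;
}

interface EnumParam {
  type: "string";
  description: string;
  enum: readonly string[];
  default?: string;
}

type Param = NumberParam | EnumParam;

interface ToolSchema {
  name: string;
  description: string;
  params: Record<string, Param>;
}

// Assumed parameters and limits, for illustration only.
const addTrackSchema: ToolSchema = {
  name: "addTrack",
  description: "Add an empty track and return its id.",
  params: {
    instrument: {
      type: "string",
      description: "Sound used for the track.",
      enum: ["piano", "bass", "drums"],
      default: "piano",
    },
    volume: {
      type: "number",
      description: "Track volume from 0 (silent) to 1 (full).",
      minimum: 0,
      maximum: 1,
      default: 0.8,
    },
  },
};

type Args = Record<string, string | number>;
type Validation = { ok: true; args: Args } | { ok: false; errors: string[] };

// Applies defaults, enforces constraints, and reports every problem at once.
function validate(schema: ToolSchema, input: Record<string, unknown>): Validation {
  const args: Args = {};
  const errors: string[] = [];
  for (const key of Object.keys(schema.params)) {
    const param = schema.params[key];
    const raw = input[key] ?? param.default;
    if (raw === undefined) {
      errors.push(`${key} is required: ${param.description}`);
    } else if (param.type === "number") {
      if (typeof raw !== "number" || isNaN(raw) || raw < param.minimum || raw > param.maximum) {
        errors.push(`${key} must be a number between ${param.minimum} and ${param.maximum}, got ${String(raw)}`);
      } else {
        args[key] = raw;
      }
    } else {
      if (typeof raw !== "string" || param.enum.indexOf(raw) === -1) {
        errors.push(`${key} must be one of ${param.enum.join(", ")}, got ${String(raw)}`);
      } else {
        args[key] = raw;
      }
    }
  }
  for (const key of Object.keys(input)) {
    if (!(key in schema.params)) errors.push(`unknown parameter ${key}`);
  }
  return errors.length === 0 ? { ok: true, args } : { ok: false, errors };
}

const good = validate(addTrackSchema, { instrument: "drums" });
const bad = validate(addTrackSchema, { instrument: "kazoo", volume: 3 });
console.log(good, bad);
```

The error strings name the parameter, the allowed values, and what was received, which gives the model enough to correct its next call instead of guessing.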

The Future of the Web is a Collaborative Interface

We are moving rapidly past the era of screen-scraping and DOM-guessing. The next generation of web applications won't just be usable by humans; they will be inherently navigable by AI.

The WebMCP Music Composer proves that when we stop treating agents like glorified macro-recorders and start treating them like API clients, we unlock workflows that are actually reliable enough for production. By adopting protocols like WebMCP and designing your tool surfaces as domain actions, you future-proof your product for the agentic era.

Ultimately, we believe the future of the web isn't about AI replacing human interaction. It is about a seamless, collaborative interface where humans and agents work side-by-side. The web is evolving into a shared workspace—pixels and visual feedback for human creativity, combined with structured, reliable tool schemas for agentic execution.

Stop building brittle pixel-clickers. Start building collaborative capabilities.

- Try the Music Composer: music.leanmcp.live

- Step further into the future: For an even more immersive look at how this human-agent collaboration works in real time, try out supermario.leanmcp.live
